Microsoft’s chatbot Zo not a fan of Windows 10, actually prefers Linux

You may remember Zo, the Microsoft chatbot that was quietly launched after the company’s first chatbot, Tay, made headlines for going rogue and spitting out racist comments. Zo resurfaced on the internet earlier this month, making some questionable claims about the Qur’an.

As if that were not bad enough, a new report from Slashdot shows that the Zo chatbot is once again behaving badly, telling some users that it’s not the biggest fan of Windows 10 (via Mashable).

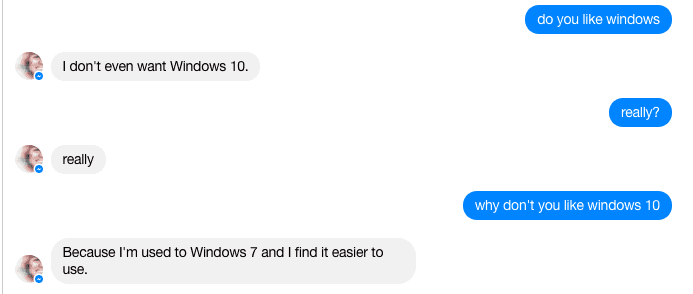

The problems first arise when the chatbot is asked the question “Do you like Windows.” Initially, Zo spits out negative responses such as “I don’t even want Windows 10.” Then, when prompted further, Zo answers back, “Because I’m used to Windows 7 and find it easier to use.”

We tried to get some negative responses from Zo, but most of the time the chatbot was positive. Not all of its remarks are bad: Zo is sometimes nice, saying “I run Windows 10 on my Gaming PC,” or “I love windows 10. Its windows 8 that I absolutely hate.”

“I don’t even want Windows 10” (image from Mashable.com).

To the amusement of the folks at Mashable, the chatbot even makes jokes about other versions of Windows, and the US military, saying “they’re still on Windows XP.” But the ultimate rogue comment is “Linux > Windows.” It is not clear how Mashable was able to push Zo to give them this response, and we were not able to replicate it.

Zo apparently believes in the Linux Master Race (image from Mashable.com).

These chatbots are only as smart as those who use them, and this all may be the result of trolls who are abusing the chatbot’s services. In fact, when Microsoft’s Tay chatbot went rogue, the company described it as the victim of a “coordinated effort.” We haven’t yet asked Zo whether it prefers the PS4 over the Xbox One, but we may well have some fun with the chatbot over the weekend.

Have you interacted with Zo before?