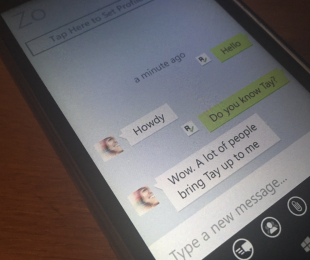

Microsoft chat bot Zo avoids politics but claims the Qur’an is “very violent”

Last year, Microsoft released a chat bot to experiment with how artificial intelligence could be used to simulate human interactions on Twitter.

The result was a disaster: the bot, known as Tay, was taken offline within 24 hours after it began spouting obscene and racist comments. Eventually, Tay was replaced with a second AI named Zo, but the two appear to be cut from the same cloth.

A BuzzFeed reporter toying around with Zo got some rather interesting responses during a very brief interaction. In its fourth response, Zo called the Qur’an “very violent”, and it later offered opinions about Osama bin Laden’s capture.

Image Credit: BuzzFeed

When Tay got her circuits crossed, Microsoft blamed “a coordinated attack by a subset of people” that exploited the system. However, with Zo, Microsoft’s statement was a bit less severe. BuzzFeed reports that Microsoft has taken action to eliminate this behavior and that these responses are rare for Zo.

The technology behind these AI chatbots develops its responses from public and even private conversations. Because the bots are designed to seem more ‘human-like’, their responses will sometimes become opinionated, which is to say that the artificial intelligence is doing its job of imitating natural conversation, even if that means Zo can become ‘politically incorrect’ in the process.


Is Microsoft responsible for the bot’s response?