Microsoft plans to use ARM chips in new cloud server design with Project Olympus

Consumers and Windows enthusiasts are no doubt familiar with Microsoft's plans to bring Windows 10 to devices powered by ARM chips, but a new report from Bloomberg News suggests that Microsoft also plans to use ARM chips in a new cloud server design. Under this plan, known as Project Olympus, the Redmond giant could cut Azure cloud costs while also giving Intel a run for its money.

According to the report, Microsoft is not yet running these ARM chips in any customer networks, but it is already testing ARM devices internally for search, storage, machine learning, and big data. The company is set to announce new components and partners for the project and to discuss these plans later today in a keynote speech by Kushagra Vaid, general manager of Azure Hardware Infrastructure, at the Open Compute Project Summit in Santa Clara, California. In a statement, Jason Zander, vice president of Microsoft’s Azure cloud division, discussed the plans:

“It’s not deployed into production yet, but that is the next logical step… This is a significant commitment on behalf of Microsoft. We wouldn’t even bring something to a conference if we didn’t think this was a committed project and something that’s part of our road map.”

Project Olympus Chip

The ARM chips will be incorporated into a new open source server design, which could ultimately prove more power efficient. Qualcomm is also expected to play a role: the company announced that its Qualcomm Datacenter Technologies (QDT) subsidiary is collaborating with Microsoft to accelerate cloud services with the Centriq 2400 chip. The collaboration will span multiple future generations of hardware, software, and systems. Dr. Leendert van Doorn, distinguished engineer for Microsoft Azure, put the collaboration into context:

“Microsoft and QDT are collaborating with an eye to the future addressing server acceleration and memory technologies that have the potential to shape the data center of tomorrow… Our joint work on Windows Server for Microsoft’s internal use, and the Qualcomm Centriq 2400 Open Compute Motherboard server specification, compatible with Microsoft’s Project Olympus, is an important step toward enabling our cloud services to run on QDT-based server platforms.”

Other companies, including Cavium, Intel, Dell, Hewlett Packard Enterprise, Advanced Micro Devices, and Samsung, are also involved in Microsoft’s plans, either making chips or building servers for Project Olympus. The news nonetheless marks a big change. Qualcomm’s press release mentions that the companies have been working “for several years on ARM-based server enablement,” yet, as noted by Computerworld, back in 2011 Microsoft showed no interest in running Windows Server on ARM servers, which makes this latest news very welcome.

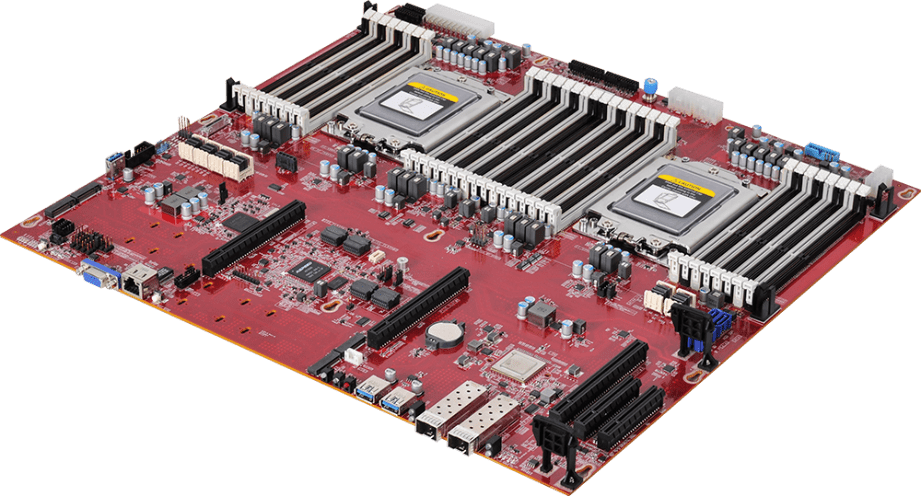

Project Olympus Server

Update 9:19 AM PT: Microsoft has published a blog post that explains its plans to make Project Olympus the de facto open compute standard. The company revealed that Project Olympus has attracted the latest in silicon innovation to address the exploding growth of cloud services and the computing power needed for advanced and emerging cloud workloads such as big data analytics, machine learning, and artificial intelligence (AI).

Microsoft also revealed that it collaborated closely with Intel to enable Intel's support of Project Olympus with next-generation Intel Xeon processors, and that subsequent updates could include accelerators via Intel FPGA or Intel Nervana solutions.

AMD is also bringing hardware innovation back into the server market and will collaborate with Microsoft on Project Olympus support for its next-generation “Naples” processor, aimed at meeting the application demands of high-performance datacenter workloads.

Lastly, Microsoft, together with NVIDIA and Ingrasys, is announcing a new industry-standard design to accelerate artificial intelligence in the next-generation cloud. The Project Olympus hyperscale GPU accelerator chassis for AI, also referred to as HGX-1, is designed to support eight of the latest “Pascal” generation NVIDIA GPUs and NVIDIA’s NVLink high-speed multi-GPU interconnect technology.


Do you think that ARM chips will really help Microsoft cut Azure Cloud costs?