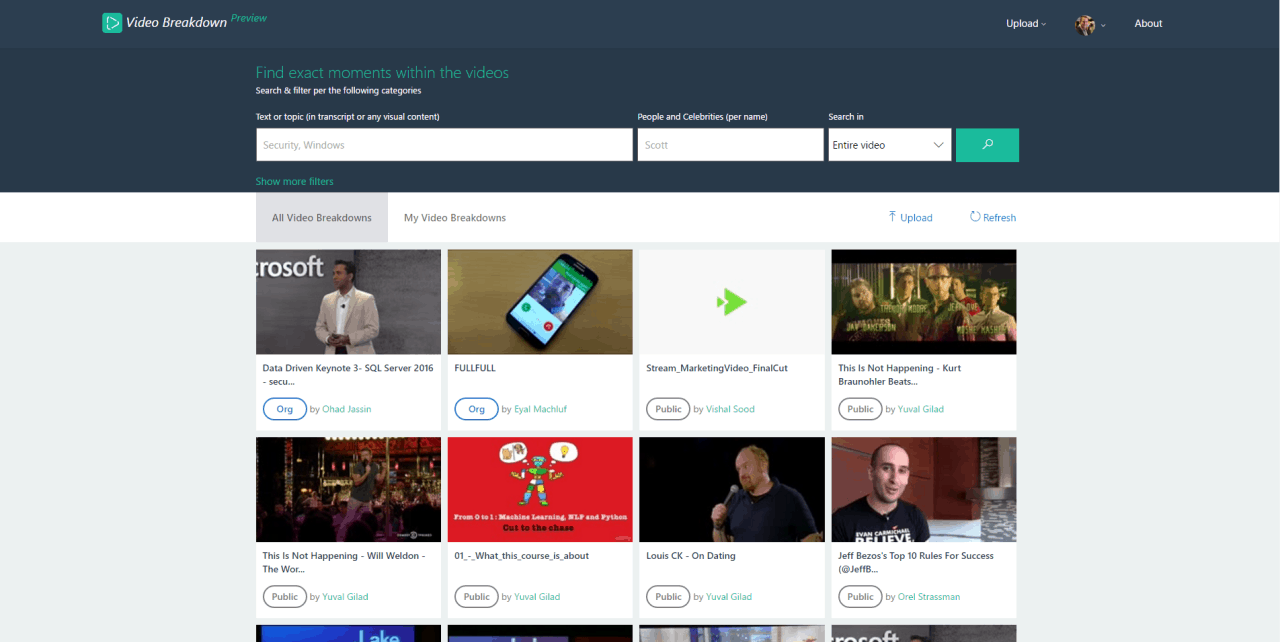

Video Breakdown, a new video platform from the Microsoft Garage, lets you search for videos on a specific topic and helps you find the best segments of a video to use in a presentation or other project. Previously released as a preview, Video Breakdown is now ready for primetime.

Head of ILDC Incubations, Ohad Jassin, led the Microsoft team that created Video Breakdown. Based in Israel, ILDC Incubations is contracted to help find new technologies for Microsoft. Currently, ILDC Incubations is exploring new Microsoft projects at the Microsoft Development Center (MDC). Jassin explains how Video Breakdown sets itself apart from other video platforms:

“Other video platforms, they differentiate between content consumers and producers. You’re either watching or creating. With Video Breakdown, we’re blurring the lines between content producers and consumers.”

Video Breakdown uses a series of Microsoft services and APIs to extract insights from uploaded video content. For example, once you upload a video file to Video Breakdown, Microsoft Cognitive Services and Azure Media Analytics analyze the file with help from other Azure-powered services. From there, Microsoft breaks down the video file into an audio transcript; face tracking, grouping, and identification; speaker differentiation; and optical character recognition. Video Breakdown also extracts pertinent topics and subject matter from the video file.
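To make that breakdown concrete, here is a minimal Python sketch of how such per-video insights might be held together. The class and field names (`VideoInsights`, `TranscriptLine`, `FaceAppearance`, `OcrText`) are hypothetical and are not Video Breakdown's actual schema; the sketch only mirrors the kinds of output described above.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TranscriptLine:
    start: float   # seconds from the start of the video
    end: float
    speaker: str   # label from speaker differentiation, e.g. "Speaker 1"
    text: str


@dataclass
class FaceAppearance:
    start: float
    end: float
    name: Optional[str] = None  # None when a face is tracked but not identified


@dataclass
class OcrText:
    start: float
    end: float
    text: str      # text read from the video frames


@dataclass
class VideoInsights:
    """Hypothetical container for everything extracted from one video."""
    transcript: list[TranscriptLine] = field(default_factory=list)
    faces: list[FaceAppearance] = field(default_factory=list)
    ocr: list[OcrText] = field(default_factory=list)
    topics: list[str] = field(default_factory=list)

    def speakers(self) -> list[str]:
        """Distinct speaker labels, in order of first appearance."""
        seen: list[str] = []
        for line in self.transcript:
            if line.speaker not in seen:
                seen.append(line.speaker)
        return seen
```

Because every entry carries a start and end time, any insight (a spoken sentence, a face, on-screen text) can be mapped back to a position in the video.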

Find exact moments within the videos

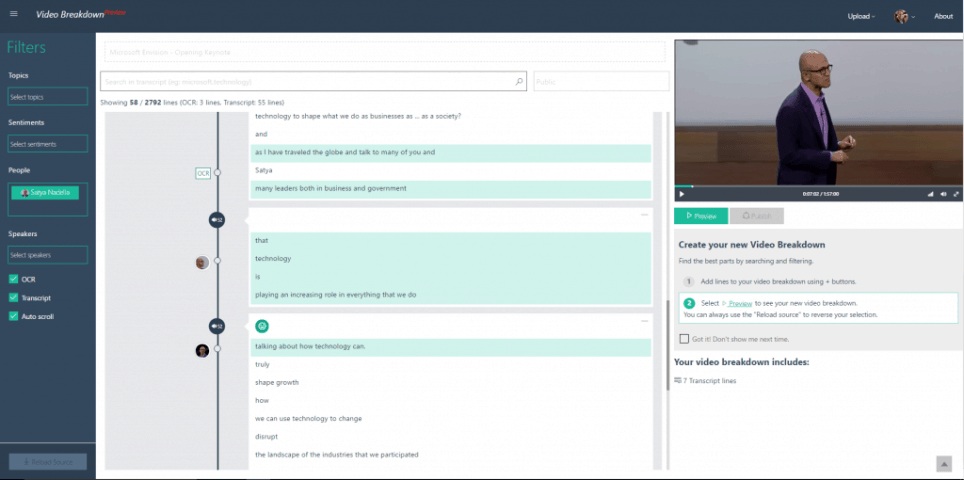

Microsoft uses all of this information to build an index of the video file so that the video can be searched by topic. Searching through an hours-long keynote for a specific topic can be incredibly time-consuming. With Video Breakdown, you can instead find the exact moment in a video when a speaker addresses that topic (here is a Video Breakdown example).
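The idea of searching a time-coded index can be sketched in a few lines of Python. This is an illustration only: the `Segment` type and the `find_moments` and `as_timestamp` helpers are hypothetical, and a plain case-insensitive substring match stands in for the much richer language analysis the service performs.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # seconds from the start of the video
    end: float
    text: str     # transcript text spoken during this segment


def find_moments(segments: list[Segment], query: str) -> list[Segment]:
    """Return the segments whose text mentions the query, in time order."""
    needle = query.casefold()
    hits = [s for s in segments if needle in s.text.casefold()]
    return sorted(hits, key=lambda s: s.start)


def as_timestamp(seconds: float) -> str:
    """Format a position in seconds as H:MM:SS, suitable for a jump point."""
    hours, rem = divmod(int(seconds), 3600)
    minutes, secs = divmod(rem, 60)
    return f"{hours}:{minutes:02d}:{secs:02d}"


segments = [
    Segment(0, 12, "Welcome to the keynote"),
    Segment(3725, 3740, "Now let's talk about Azure Media Analytics"),
]
for hit in find_moments(segments, "azure"):
    print(as_timestamp(hit.start), hit.text)
```

Each match comes back with its start time, so a player could jump straight to that point in an hours-long recording instead of scrubbing through it.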

To achieve this search capability, Video Breakdown combines many types of recognition, including computer vision, speech-to-text, language understanding, linguistic analysis, text analytics, face tracking and identification, and image search. The user can see where specific topics are referenced and gets jump points that can be used to create a seamless video experience.

Video Breakdown snippet search

Video Breakdown also offers something that other video platforms do not. Jassin explains in more detail:

“Most videos are tagged by manual curation, which is error prone, inaccurate and usually involves a level of granularity focusing on the entire video. We wanted to do that automatically. Video Breakdown gets linguistic transcripts from audio, detects faces and identifies them – assuming they’re part of top recognized faces, such as celebrities. It’ll tell you who spoke when. We’re innovating a lot of experiences here. We recreated the search experience, video editing, video playing and how they’re shared. There’s nothing to compare it to. So there’s a lot of learning we have to do along the way. We wanted to go publicly and get feedback. Those abilities of video indexing are highly relevant, but we want to make sure it’s battle proven. The Garage allows us to safely experiment with that approach and technology, on a real scale, in a meaningful manner.”

Give Video Breakdown a chance and take a look at the other apps available from the Microsoft Garage.

Further reading: Azure, Azure Media Analytics, Microsoft, Microsoft Cognitive Services, Microsoft Garage, Video breakdown